AI in Customer Service: Why Automating Simple Tasks Actually Makes the Job Harder

Automating repetitive tasks in customer service is widely presented as a straightforward win: less operational friction, more time for high-value interactions. In theory, that logic holds. In practice, what most organizations failed to anticipate is that removing simple tasks from agents' daily workflows does not free them up. It exposes them continuously to the most complex, emotionally demanding, and exhausting interactions in the queue. This is not a speculative concern about the future of work. It is an operational reality playing out right now in contact centers that have deployed generative AI at scale.

A workday built entirely around complex, high-stakes situations bears no resemblance, in terms of cognitive and emotional load, to one that alternates between routine tasks and challenging conversations. Routine interactions serve as a natural pressure valve, giving agents a chance to reset between difficult cases. When AI removes that valve without replacing it, the result is a continuous stream of high-intensity demands with no recovery window. That is the central paradox of AI-driven CX transformation: by making teams more efficient at volume, organizations have made the remaining work structurally harder.

What Automation Actually Changes for CX Teams

When a chatbot or conversational AI handles Tier 1 requests (order tracking, account updates, FAQs), it intercepts a significant portion of inbound volume before it ever reaches a human agent. The productivity gains are real and measurable. But the residual flow that reaches human teams is, by definition, filtered: it contains only what the AI could not resolve. That means ambiguous situations, emotionally charged complaints, multi-layered problems, and judgment calls the model is not equipped to make.

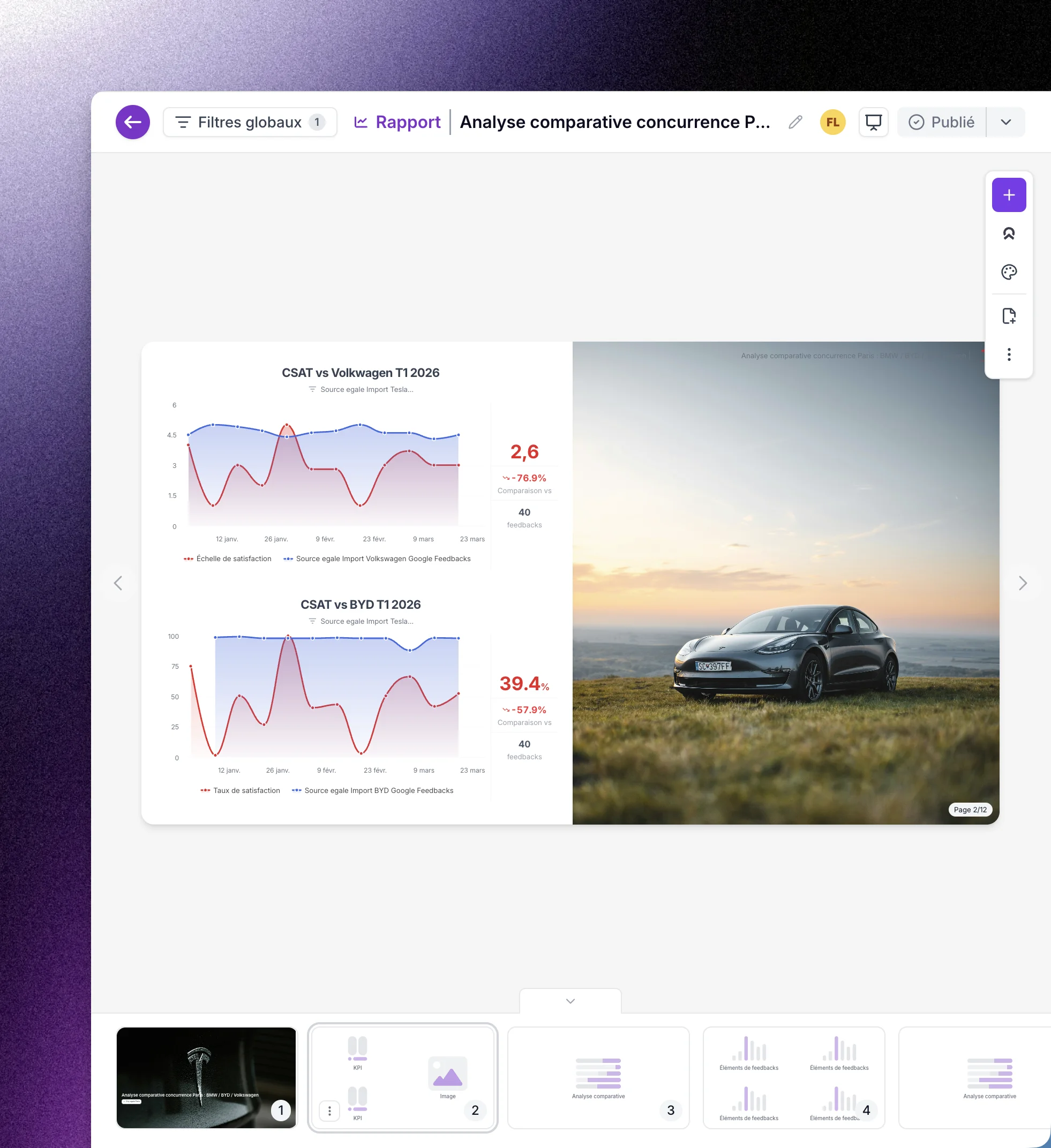

This concentration of complex cases on human agents produces several concrete effects. Emotional load per interaction increases, average handle time grows, and internal escalation rates rise with them. Productivity metrics based on ticket volume deteriorate mechanically, and that deterioration does not reflect any drop in team performance. It reflects a fundamental change in the nature of the work. An organization that continues to evaluate its CX teams on volume-based KPIs after deploying AI has misread its own operating model. It is penalizing its best people for a transformation it initiated.
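To make the mechanical effect on volume metrics concrete, here is a minimal Python sketch. Every figure in it is a hypothetical assumption chosen for illustration (the ticket mixes, the 4-minute and 15-minute handle times), not data from any contact center. It shows only that tickets per hour falls when the mix shifts toward complex cases, even though the handling time for each type of ticket is unchanged.

```python
def tickets_per_hour(mix):
    """Return tickets handled per agent-hour for a given ticket mix.

    mix is a list of (share_of_tickets, avg_handle_minutes) pairs.
    Shares are expected to sum to 1.
    """
    avg_minutes = sum(share * minutes for share, minutes in mix)
    return 60 / avg_minutes


# Hypothetical mix before automation: 70% simple tickets (4 min each),
# 30% complex tickets (15 min each).
before = [(0.7, 4.0), (0.3, 15.0)]

# Hypothetical mix after automation: the AI absorbs most simple tickets,
# so agents see 10% simple and 90% complex. Handle times per ticket type
# are deliberately left unchanged.
after = [(0.1, 4.0), (0.9, 15.0)]

rate_before = tickets_per_hour(before)
rate_after = tickets_per_hour(after)

print(f"before automation: {rate_before:.1f} tickets/hour")
print(f"after automation:  {rate_after:.1f} tickets/hour")
```

Under these assumed numbers, the volume metric drops by roughly half while the agent performs exactly as well on each type of ticket. The change comes entirely from the mix.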

McKinsey has documented this dynamic in its analysis of generative AI's impact on service roles: productivity gains from automation concentrate on routine cognitive tasks, while behavioral skills such as empathy, emotional regulation, and conflict resolution become the critical residual resource, one that AI cannot replicate and that organizations have invested the least in developing.

The Management Blind Spot No Dashboard Is Catching

For CX directors and frontline managers, the challenge is twofold. First, the problem is invisible in standard operational dashboards. Emotional overload does not trigger an alert in a workforce management platform. It surfaces with a delay: rising absenteeism, increased turnover, gradual deterioration in interaction quality, and declining satisfaction scores on complex cases, which now represent the majority of the workload. By the time the warning signal appears, the management debt has already compounded.

Second, the standard managerial response (setting objectives, tracking metrics, adjusting procedures) is the wrong tool for this problem. This is not a process issue. It is a psychological safety issue. Teams managing an abnormally high density of difficult interactions need leadership grounded in genuine empathy, explicit recognition of the real weight of their work, and structured space for decompression. They do not need a new dashboard.

This is precisely where the manager's role is being redefined in the AI era. Giving work meaning is not a metaphor. It is an operational competency. An agent handling a continuous flow of complex complaints without acknowledgment from their manager gradually disengages from the mission. AI has stripped away the variety of their work. What remains is the difficulty. What the manager must restore is the conviction that this difficulty has value, that it is not a symptom of dysfunction but proof that their role is irreplaceable. For a deeper look at how CX team responsibilities are evolving in this context: The Role of CX Teams: Missions, Impact, and Challenges.

Behavioral Skills Are Now a Strategic Business Asset

As automation progressively erodes the value of technical processing skills (data entry, information retrieval, tool navigation), it correspondingly amplifies the value of behavioral competencies: active listening, emotional intelligence, managing uncertainty, and making sound decisions in unprecedented situations. These are not soft skills. They are differentiating operational capabilities that are difficult to build, impossible to automate, and directly correlated with customer satisfaction on high-stakes interactions.

The paradox is that these competencies have historically been undervalued in CX job frameworks, precisely because they were perceived as innate rather than learned. Organizations hired empathetic profiles; they did not train for empathy. AI forces a revision of that logic. When empathy becomes the critical residual skill, it must be treated accordingly: trained, assessed, reinforced, and compensated in proportion to its operational value.

Leading organizations are already redesigning their learning and development programs to incorporate emotional regulation, high-tension communication, and decision-making in ambiguous situations. Some are drawing on methods from the performing arts, particularly improvisational theater, to build listening, adaptability, and presence. These approaches, long confined to personal development, now have a direct operational application in post-automation CX teams, where the quality of human connection is the only differentiator AI cannot replicate.

What Customer Verbatims Reveal That Metrics Never Will

One of the blind spots in AI-driven CX transformation is the measurement of employee experience. Internal listening programs, HR surveys, and engagement tools are often disconnected from the operational signals agents send every day through their customer interactions. An emotionally overloaded agent does not file a ticket with their manager. They slow down almost imperceptibly, handle requests with less precision, and respond with less energy. These weak signals do not appear in a productivity dashboard. They appear in customer verbatims.

Semantic analysis of customer feedback on human agent interactions represents a significantly underutilized source of management intelligence. When a large language model processes thousands of verbatims and detects a progressive deterioration in the emotional tone of interactions within a given team or segment, it generates an early warning signal that neither HR surveys nor operational metrics could surface at the same speed. This is a concrete application of AI in service of workforce management, not just customer experience. For a closer look at how semantic analysis works at industrial scale: Semantic Analysis in CX: How AI is Redefining the Customer Experience.
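The early warning described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of any particular product. It assumes an upstream model has already scored each verbatim for emotional tone on a scale from -1 to 1 and that the scores have been averaged per team per week. The team names, the scores, and the alert threshold of -0.02 per week are all hypothetical.

```python
from statistics import mean


def tone_slope(weekly_scores):
    """Least-squares slope of weekly tone scores against the week index.

    A negative slope means the average tone is deteriorating over time.
    """
    n = len(weekly_scores)
    if n < 2:
        raise ValueError("need at least two weeks of scores")
    xs = range(n)
    x_bar = mean(xs)
    y_bar = mean(weekly_scores)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, weekly_scores))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def flag_teams(scores_by_team, threshold=-0.02):
    """Return the teams whose tone declines at least as fast as threshold.

    threshold is a hypothetical alert level, in tone points per week.
    """
    return sorted(
        team
        for team, scores in scores_by_team.items()
        if tone_slope(scores) <= threshold
    )


# Hypothetical weekly average tone scores (range -1 to 1) for two teams.
weekly_tone = {
    "team_a": [0.40, 0.38, 0.33, 0.30, 0.24, 0.20],  # steady decline
    "team_b": [0.35, 0.37, 0.34, 0.36, 0.35, 0.36],  # stable
}

flagged = flag_teams(weekly_tone)
print(flagged)
```

A slope over several weeks is used here instead of a single week-over-week drop so that one bad week does not trigger an alert. In practice the window length and threshold would need to be calibrated against each team's own history.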

Rethinking the Governance of CX Automation

The operational lesson of this paradox is that automation cannot be driven solely by volumetric efficiency criteria. Automating a task because it is frequent and repetitive is a rational cost decision. But if that decision structurally increases the load on human teams without corresponding adjustments to working conditions, management practices, and performance frameworks, the ROI it reports is only partial, and it masks a real human cost.

Sound governance of CX automation integrates three dimensions simultaneously. The first is residual flow analysis: what remains for human teams after AI has handled what it can? What is the emotional density of that residue? The second is the adaptation of performance indicators: volume metrics must be replaced or supplemented by quality metrics on complex cases that accurately reflect the real value added by human teams. The third is explicit investment in behavioral competencies and psychological safety, which become operational assets on par with technology.

AI is not a threat to CX teams. It is a diagnostic instrument. It reveals what these teams do exceptionally well, what no script or language model will ever replicate: creating an authentic human connection in a genuinely difficult moment. The challenge for organizations is not to resist this transformation. It is to manage its workforce implications with the same rigor they apply to its operational benefits.
